What You Feed the Model Is the Model

The practitioners who consistently produce good threat models are not the ones with the most sophisticated tooling. They are the ones who are obsessive about what goes in. Get that right, and all the rest (the methodology, the AI assist, the output format) will fall into place.

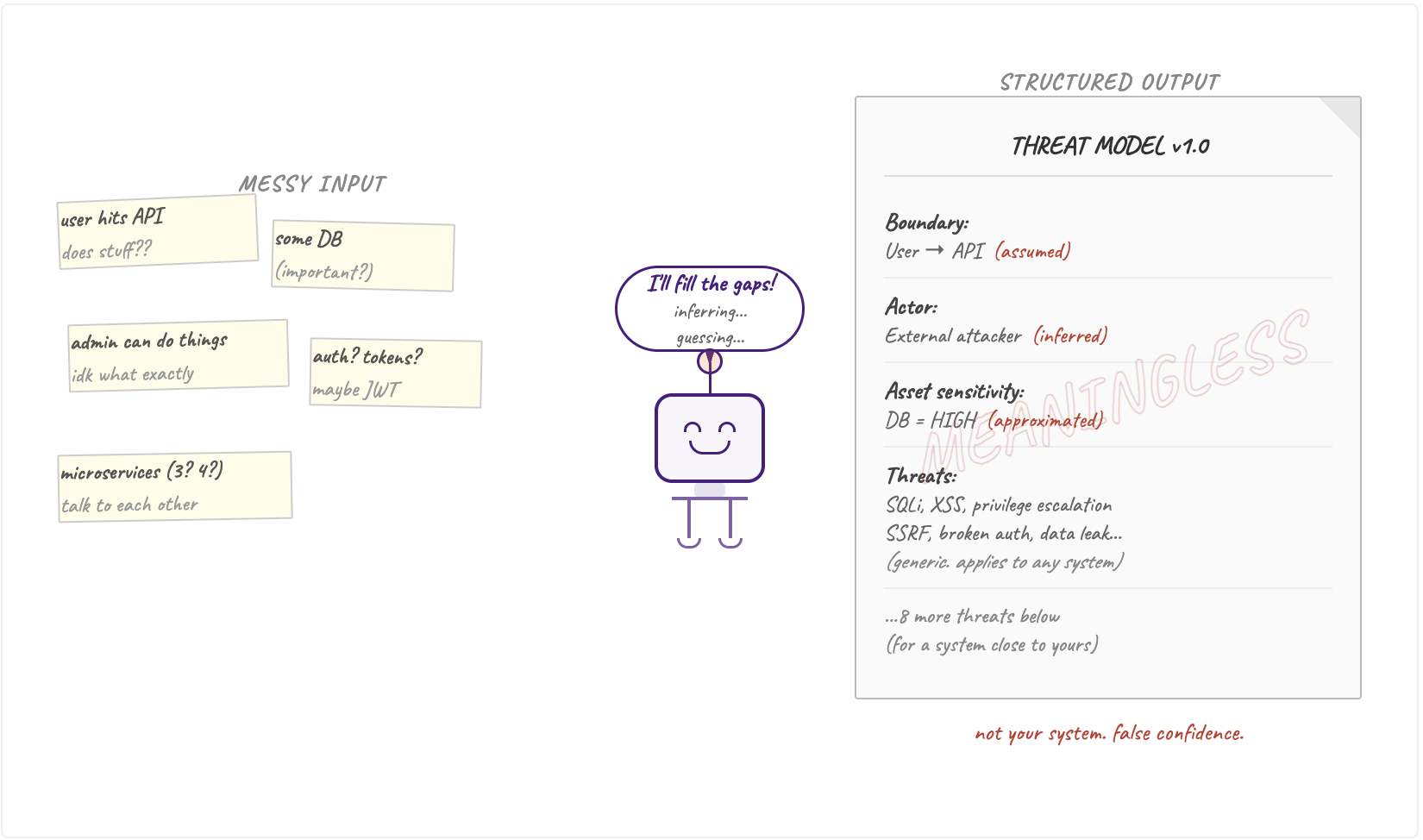

While threat modeling, keep in mind a long-standing expression in computer science: garbage in, garbage out (GIGO). A good human threat modeler will fill the gaps by asking questions and verifying the answers. A well-meaning LLM will just assume for you, to "save you the trouble".

I keep hearing this a lot lately and, admittedly, have echoed it myself: any threat model is better than no threat model. I agree. This is settled.

But I'm also a perfectionist, and Adam Shostack's fourth question haunts me: did we do a good job? So rather than settling for "good enough," I've found myself on a slightly obsessive pursuit, giving LLMs the best possible chance to produce the best possible threat model. What building DevArmor has taught me is that the biggest variable in that equation isn't the type of the data flow diagram, the large language model, or the methodology.

It's the input.

Izar Tarandach and Matthew Coles make this point plainly in their book Threat Modeling: A Practical Guide for Development Teams - you cannot have a good threat model without a good model of the system. The input problem isn't new. As Izar Tarandach mentioned in one of our conversations: "One of the ways you're guaranteed to get completely authoritative and mostly unusable results is to give a bad, insufficient or uncertain system model to your LLM. You give it garbage, it will happily give you garbage back. Amplified."

The Anatomy of a Threat and Why Your Input Has to Match It

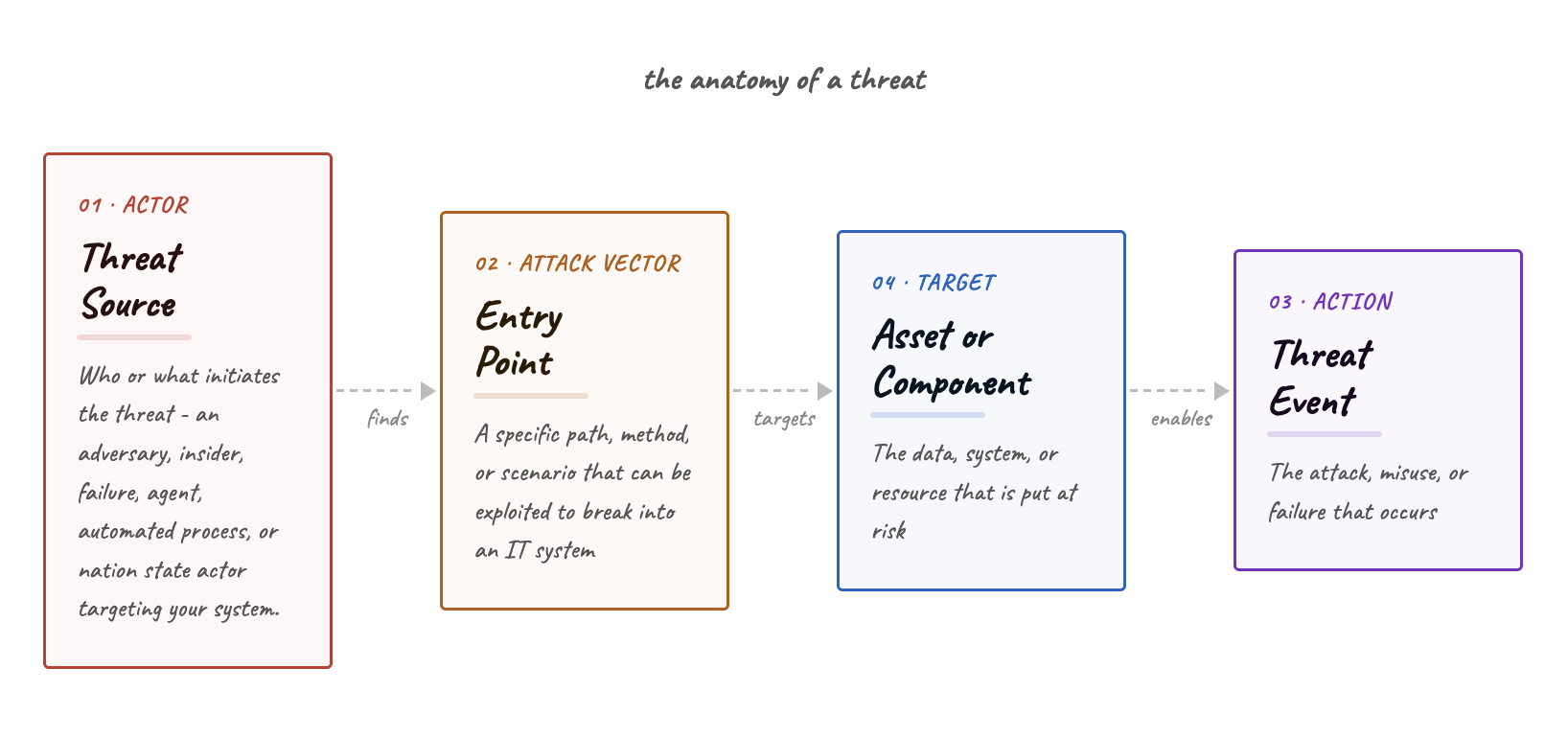

NIST SP 800-30 defines a threat in terms of two components: a threat source (who or what initiates it) and a threat event (the action they take). That's the foundational definition, and it's a seed that was planted in my head early in my cybersecurity career. As I ran threat modeling sessions in practice and eventually built my own threat modeling tools with DevArmor, it grew into my own anatomy of a threat.

The model I work with now looks like this: a threat source finds or exploits an entry point, which allows them to cause a threat event on a targeted asset or component. The entry point is where your trust boundaries live. The targeted asset is where your processes, data stores, and any other resources in your inventory live.
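
The four parts of that sentence can be written down as a small data structure. The following is a minimal Python sketch, not DevArmor's actual schema; the class name, field names, and example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threat:
    """One threat, expressed with the four-part anatomy described above."""
    threat_source: str   # who or what initiates the threat
    entry_point: str     # where a trust boundary is crossed
    threat_event: str    # the action the source takes
    targeted_asset: str  # the process, data store, or other resource affected

    def statement(self) -> str:
        """Render the threat as a single readable sentence."""
        return (
            f"{self.threat_source} uses {self.entry_point} "
            f"to {self.threat_event}, targeting {self.targeted_asset}."
        )


# Hypothetical example values, not taken from any real system.
example = Threat(
    threat_source="An unauthenticated internet user",
    entry_point="the public password-reset endpoint",
    threat_event="enumerate valid accounts",
    targeted_asset="the customer identity store",
)
print(example.statement())
```

If any of the four fields cannot be filled from your input, that is usually a sign the input is missing something, not that the threat is generic.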

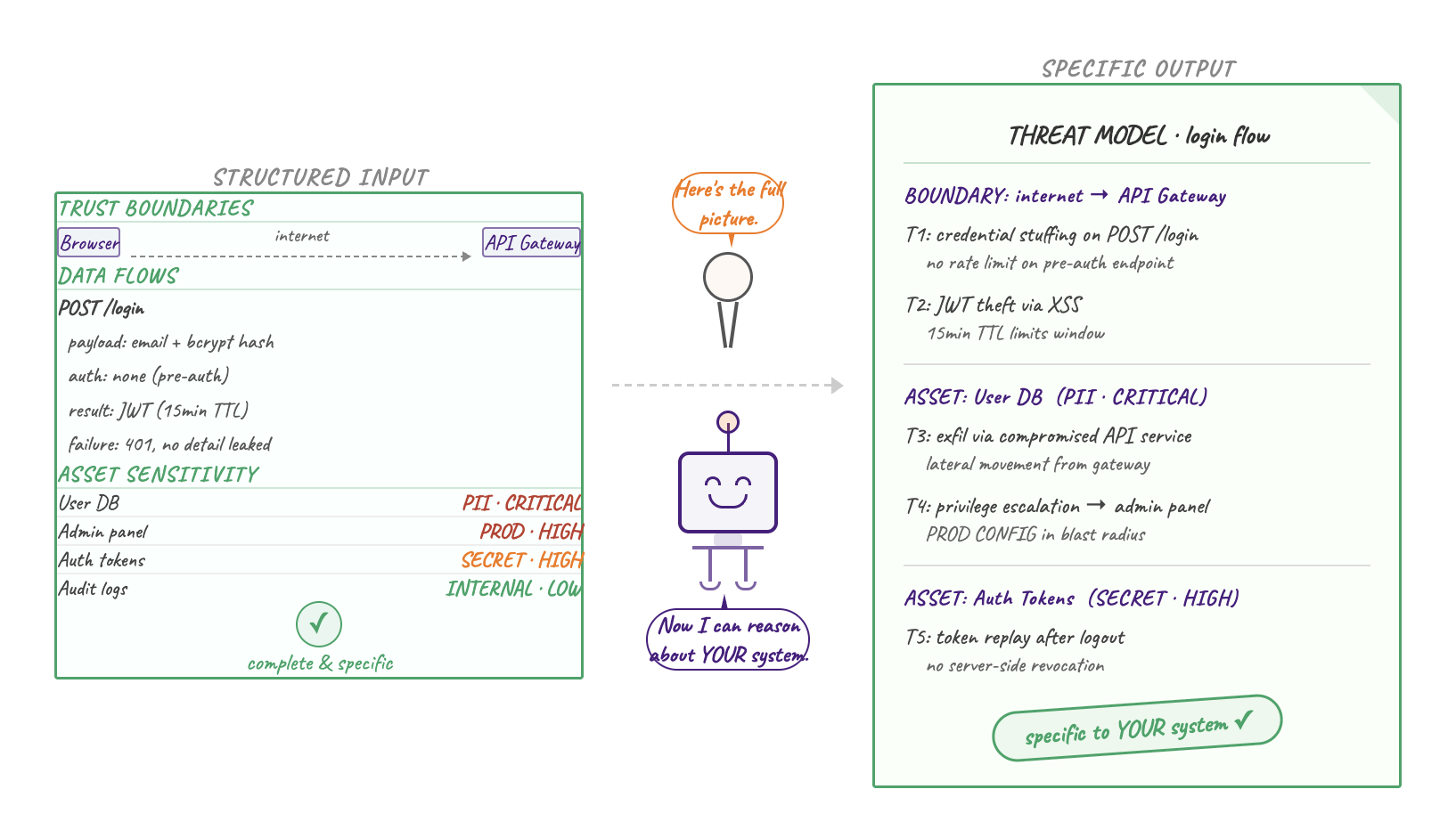

This is why structured input matters so much. For an LLM to produce threats that are specific to your system rather than generic to any system, it needs to know: who your actors are, where your trust boundaries sit, what you're storing and how sensitive it is, how data moves through the system, what controls already exist, and critically, which boundary each control is actually protecting.
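
As an illustration, the elements listed above could be captured in a structure like the one below. This is a sketch under my own assumptions: the type names, field names, and sensitivity labels are hypothetical and do not come from DevArmor or any published schema. The helper shows the last point in the list, checking that every control names a boundary the model actually declares.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Asset:
    name: str
    sensitivity: str  # illustrative labels such as "public", "internal", "pii"


@dataclass
class DataFlow:
    source: str
    destination: str
    crosses_boundary: Optional[str] = None  # trust boundary crossed, if any


@dataclass
class Control:
    name: str
    protects_boundary: str  # the boundary this control actually protects


@dataclass
class SystemModel:
    actors: List[str] = field(default_factory=list)
    trust_boundaries: List[str] = field(default_factory=list)
    assets: List[Asset] = field(default_factory=list)
    data_flows: List[DataFlow] = field(default_factory=list)
    controls: List[Control] = field(default_factory=list)


def unanchored_controls(model: SystemModel) -> List[str]:
    """Return names of controls that point at a boundary the model never declares."""
    known = set(model.trust_boundaries)
    return [c.name for c in model.controls if c.protects_boundary not in known]


# Hypothetical system, for illustration only.
model = SystemModel(
    actors=["customer", "support agent"],
    trust_boundaries=["internet -> api gateway"],
    assets=[Asset("orders-db", "pii")],
    data_flows=[DataFlow("customer", "api gateway", "internet -> api gateway")],
    controls=[
        Control("WAF", "internet -> api gateway"),
        Control("mTLS", "api gateway -> orders service"),
    ],
)
print(unanchored_controls(model))  # controls whose boundary is not declared
```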

Without this, you are not giving the LLM less to work with but gaps to fill. And it will fill them. A well-meaning model will infer actors, assume boundaries, and approximate asset sensitivity based on whatever context it can extract. The output will be coherent and structured, but it will be describing a system that is close to yours but not yours, which is exactly the kind of threat model that gives you false confidence at the worst possible moment. Or it will bury you in hallucinations and assumptions that take longer to correct and verify than it would have taken to provide the structured input in the first place.

This is how we structured DevArmor's input model: with the four-part threat anatomy in mind as the backbone of the expected output. Our observation was that when the tool didn't reliably elicit this kind of structure from a user's input, the threats it produced were less meaningful and specific.

What a Complete and Structured Input Actually Means

When I say structured input, I don’t just mean avoiding giving blobs to your model. I also mean being precise about the things that translate directly into threats. For instance, are your trust boundaries explicitly drawn? Where does control pass between components, services, or actors? Where does data cross a boundary without scrutiny? These are the load-bearing walls of any threat model. If they are implicit in your architecture diagram, understood by the team but not stated, an LLM will not reliably surface them. A human expert might ask; a model will not.

Enrich the data flows with context; don’t leave them as bare arrows. Not "user sends request to API" but what is in the request, what credentials are attached, what the API does with it, where the result goes, and what happens if any of those steps fail. An arrow on a diagram is not a data flow, but merely a placeholder. Ensure your assets have sensitivity classifications. If the modeler, human or machine, doesn't know that your database contains PII, or that your internal admin panel can modify production configurations, it cannot reason about blast radius. It will produce generic threats that apply to any system rather than specific threats that apply to yours.
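
To make the difference between an arrow and a data flow concrete, here is a small Python sketch. The field names mirror the questions in the paragraph above, but they are my own hypothetical naming, not a standard or a DevArmor format.

```python
from dataclasses import dataclass
from typing import List

# The context a flow needs before it is more than an arrow (hypothetical names).
CONTEXT_FIELDS = (
    "payload",             # what is in the request
    "credentials",         # what credentials are attached
    "processing",          # what the receiver does with it
    "result_destination",  # where the result goes
    "failure_behavior",    # what happens if a step fails
)


@dataclass
class DataFlow:
    source: str
    destination: str
    payload: str = ""
    credentials: str = ""
    processing: str = ""
    result_destination: str = ""
    failure_behavior: str = ""


def missing_context(flow: DataFlow) -> List[str]:
    """List the context fields that are still empty for this flow."""
    return [name for name in CONTEXT_FIELDS if not getattr(flow, name).strip()]


# "user sends request to API": an arrow, not yet a data flow.
bare = DataFlow("user", "api")

# The same flow with illustrative context filled in.
enriched = DataFlow(
    source="user",
    destination="api",
    payload="order details including shipping address",
    credentials="session cookie",
    processing="validates input and writes an order record",
    result_destination="orders-db, then confirmation to the user",
    failure_behavior="returns a generic error, logs the request id",
)

print(missing_context(bare))
print(missing_context(enriched))
```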

Structured, complete inputs as a first-class concern is the vision that informed every design decision we made in DevArmor. But building it taught us something about how people actually work. Not everyone wants to fill in every field before they see something useful; some want to jump straight into "the LLM magic". They try to achieve a "good enough output" as quickly as possible, and work their way back to the inputs once they can see what they're building towards. We can enable that via our iterative approach and structured input. Having said that, it is clear that if you skip the human-in-the-loop and let the model fill in the attributes you did not provide, quality and accuracy will suffer meaningfully.

Izar also proposed a similar mitigation called “plan mode”, where the model surfaces the gaps in your input before they become gaps in your output. Izar Tarandach's tm_skills repo operationalizes exactly this kind of structured, question-first approach to continuous threat modeling (CTM) with LLMs, and is worth exploring if you are building agentic threat modeling workflows in-house.
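
A question-first gate of this kind might look roughly like the sketch below. To be clear, this is not how tm_skills or DevArmor implement it; the function names, dictionary keys, and question wording are hypothetical. The idea is only that missing input turns into questions for a human instead of assumptions by the model.

```python
from typing import Dict, List


def gap_questions(model: Dict) -> List[str]:
    """Turn missing parts of the input model into questions for a human."""
    questions = []
    if not model.get("actors"):
        questions.append("Who are the actors that interact with the system?")
    if not model.get("trust_boundaries"):
        questions.append("Where do the trust boundaries sit?")
    for asset in model.get("assets", []):
        if not asset.get("sensitivity"):
            questions.append(
                f"What is the sensitivity classification of '{asset['name']}'?"
            )
    return questions


def ready_for_generation(model: Dict) -> bool:
    """Only hand the model to an LLM once no open questions remain."""
    return not gap_questions(model)


# Hypothetical draft input with two gaps.
draft = {
    "actors": ["customer"],
    "assets": [{"name": "orders-db"}],
}

for question in gap_questions(draft):
    print(question)
print("ready:", ready_for_generation(draft))
```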

This thinking is also what sits behind two projects I have been actively contributing to: the OWASP Threat Model Library (as co-lead) and the CycloneDX Threat Modeling BOM (tm-bom). If structured input and a complete model are what make better threat models, and prove useful to the tools that consume their outputs, then the schema that defines that input and output structure matters. Both projects are aligned, and once the tm-bom is released, the OWASP Threat Model Library format will follow. They are both attempts to give threat model artifacts a machine-readable, interoperable format: outputs from one system can become valid, structured inputs to another, which contributes to the continuous part of continuous threat modeling.

Data Lineage and Reconciliation Are Key to Model Trust

A key to model trust is also data lineage, or provenance: know where each piece of information comes from, how current it is, and how authoritative it is for that aspect of the system. Then make a deliberate decision: one source of truth per concern, with others used to fill gaps rather than override. Make sure you can trace every piece of information in your model, and every finding, back to its source. An LLM presented with conflicting inputs will not flag the inconsistency. It will synthesize them into something that sounds coherent. Preventing that is your job, because the LLM will not catch it.
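
One way to make that decision explicit before anything reaches the LLM is sketched below. It assumes each statement about the system is recorded with its source and date; the source names, concerns, dates, and the rule of falling back to the most recent statement are assumptions of this sketch, not a prescribed method.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Fact:
    concern: str  # aspect of the system, e.g. "infrastructure"
    key: str      # what is being described
    value: str
    source: str   # where the statement came from
    as_of: date   # how current the statement is


# Hypothetical choice of one authoritative source per concern.
SOURCE_OF_TRUTH = {
    "infrastructure": "terraform-plan",
    "api-surface": "openapi-spec",
}


def reconcile(facts):
    """Pick one value per (concern, key) and report every disagreement."""
    grouped = {}
    for fact in facts:
        grouped.setdefault((fact.concern, fact.key), []).append(fact)

    resolved, conflicts = {}, []
    for (concern, key), group in grouped.items():
        authoritative = [f for f in group if f.source == SOURCE_OF_TRUTH.get(concern)]
        # Other sources only fill gaps; they never override the source of truth.
        # Falling back to the most recent statement is an assumption of this sketch.
        candidates = authoritative or group
        resolved[(concern, key)] = max(candidates, key=lambda f: f.as_of)
        values = sorted({f.value for f in group})
        if len(values) > 1:
            conflicts.append((concern, key, values))
    return resolved, conflicts


# Illustrative facts from two hypothetical sources.
facts = [
    Fact("infrastructure", "orders-db.public_access", "false", "architecture-doc", date(2024, 1, 10)),
    Fact("infrastructure", "orders-db.public_access", "true", "terraform-plan", date(2024, 6, 2)),
    Fact("api-surface", "admin-endpoint.auth", "required", "architecture-doc", date(2024, 1, 10)),
]
resolved, conflicts = reconcile(facts)
for concern, key, values in conflicts:
    print(f"Conflict on {concern}/{key}: {values}, review before prompting")
```

The conflicts list is the part worth reading: it is the inconsistency the LLM would otherwise have smoothed over.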

On the other hand, I have seen threat modelers, myself included, throw a document, some code, a diagram, and a vague brief at an LLM and call it context. It isn't. It's noise with good intentions. Before you structure anything, you need to know which of your inputs to trust. This is the key step for your threat model's quality and accuracy. You almost certainly have multiple sources describing your system: a Confluence architecture doc, a Terraform plan, an OpenAPI spec, a whiteboard photo from last quarter's design session. They will contradict each other in small but meaningful ways. The architecture doc reflects the intended design, while sources such as the Terraform plan and the OpenAPI spec sit closer to what was actually implemented. The gaps between them are often exactly where the interesting threats live. Additionally, this delta can be used to determine the life stage of a threat. A threat that exists in the design but not the implementation may already be mitigated. One that exists in the implementation but not the design is worth a conversation.
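
The design-versus-implementation delta is simple set arithmetic once threats carry stable identifiers. The sketch below assumes such identifiers exist; the IDs and bucket names are hypothetical.

```python
from typing import Dict, List, Set


def classify_by_delta(design: Set[str], implementation: Set[str]) -> Dict[str, List[str]]:
    """Bucket threat IDs by where they appear."""
    return {
        # In the design only: may already be mitigated in what was built.
        "design_only": sorted(design - implementation),
        # In the implementation only: worth a conversation.
        "implementation_only": sorted(implementation - design),
        # Present in both views of the system.
        "both": sorted(design & implementation),
    }


# Hypothetical threat identifiers.
design_threats = {"T-001", "T-002", "T-003"}
implementation_threats = {"T-002", "T-003", "T-007"}

delta = classify_by_delta(design_threats, implementation_threats)
print(delta)
```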

LLMs Are Good at Breadth. You Are Responsible for Accuracy.

The genuine strength of LLMs in threat modeling is breadth and consistency. A well-prompted model will not forget to consider your authentication layer because it was distracted. It will apply STRIDE, LINDDUN, and attack tree thinking simultaneously without the cognitive overhead that makes human threat modelers dread their fifth session of the week. It will surface threat categories that a team without a specific background might skip entirely.

An LLM fills gaps with plausible-sounding assumptions, and this is not a flaw to work around. It is a property to design for. Structure your inputs precisely, and the model's breadth becomes a genuine asset. Leave the inputs loose, and you get fast, well-formatted noise.

Kim Wuyts, whose work on LINDDUN is some of the most rigorous in the field, has consistently emphasized that privacy threat modeling in particular collapses when practitioners skip the step of properly characterizing data subjects and flows.

“Knowing and understanding the data - with that I mean the information, not just the blob of data - is really useful.” - Kim Wuyts

Research by Zhang et al. published in 2024 identifies vague and underspecified prompts as a primary cause of what the field calls "prompting-induced hallucinations" - where the model, lacking sufficient context, defaults to speculative generation. The less you tell it, the more it tries to assume. A separate study by Béchard and Ayala demonstrated that providing structured, well-organized context to an LLM significantly reduces hallucination rates in the output. The implication for threat modeling is direct: structured and specific input is the best defense we have against hallucinations and against outputs that lack specificity and accuracy.

The same principle applies across methodologies: the model cannot reason about what it has not been told. Izar Tarandach, in his writing on CTM (continuous threat modeling) and LLMs, calls this "vibe threat modeling". The model files threats on autopilot, you accept them because they look right, and the whole thing ages badly. It is worth naming because it is genuinely easy to do. A well-structured output is visually indistinguishable from an accurate one if you don't know your own system well enough to spot the gaps.

Make the Output Part of Your Decision System

All this complete, structured input and output should serve a purpose. A threat model that lives in a document is not operationalized. The actionable findings need to land somewhere: a Jira board, a risk register, a backlog item with an owner. The format depends on your organization: use anything that gets meaningful security measures over the line.

Don't turn the fun and brainstorming activity of threat modeling into a hundred-page report that nobody has time to read, where the assumption is that someone will action something at some point. Put the actionable insights first. If a finding doesn't map to a decision, it isn't a finding. It's a note.
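
The rule in the last three sentences can be expressed as a small triage step. This is a sketch under my own assumptions: the field names, decision labels, and the choice to require both a decision and an owner are hypothetical, and `tracker_ref` stands in for whatever ticket or register entry your organization uses.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Finding:
    title: str
    decision: str = ""     # e.g. "mitigate", "accept", "transfer" (illustrative)
    owner: str = ""        # who is accountable for the decision
    tracker_ref: str = ""  # hypothetical ticket or risk-register reference


def triage(items: List[Finding]) -> Tuple[List[Finding], List[Finding]]:
    """Split items into actionable findings and notes.

    An item counts as a finding only if it maps to a decision with an owner.
    """
    findings = [i for i in items if i.decision and i.owner]
    notes = [i for i in items if not (i.decision and i.owner)]
    return findings, notes


# Illustrative items only.
items = [
    Finding("Admin panel reachable without MFA", "mitigate", "platform-team", "SEC-101"),
    Finding("Logging could be more verbose"),
    Finding("Order IDs are guessable", decision="mitigate"),
]

findings, notes = triage(items)
print("Findings first:", [f.title for f in findings])
print("Notes:", [n.title for n in notes])
```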

On Learning From Structured Examples

There is a reason experienced threat modelers get better over time that has nothing to do with frameworks. They have seen more systems, more failure modes, more "we thought we'd covered that" moments. That pattern recognition is hard to replicate without a large, structured dataset of real threat models to learn from.

This is part of why Julian Mehnle (DevArmor CTO) and I started the OWASP Threat Model Library. The ambition is for it to become the largest structured threat modeling dataset available. The field needs more examples, openly shared, in formats that are actually learnable from, readable by humans or machines. We are a long way from that being solved. But we are convinced it is the right direction, and that we can drag more people in with us on this journey.

Closing Thoughts

The practitioners who consistently produce good threat models are not the ones with the most sophisticated tooling. They are the ones who are obsessive about what goes in. Get that right, and the rest (framework, AI assist, output format) has something worth working with.

References

- Wikipedia, Garbage in, garbage out. https://en.wikipedia.org/wiki/Garbage_in,_garbage_out

- Wikipedia, Attack vector. https://en.wikipedia.org/wiki/Attack_vector

- Adam Shostack, Threat Modeling: Designing for Security (Wiley, 2014)

- Izar Tarandach and Matthew J. Coles, Threat Modeling: A Practical Guide for Development Teams (O'Reilly, 2021)

- Izar Tarandach, tm_skills: Agent skills to help with Continuous Threat Modeling. https://github.com/izar/tm_skills

- NIST Special Publication 800-30 Revision 1, Guide for Conducting Risk Assessments (NIST, 2012). https://nvlpubs.nist.gov/nistpubs/Legacy/SP/nistspecialpublication800-30r1.pdf

- Kim Wuyts and Wouter Joosen, LINDDUN Privacy Threat Modeling. https://www.linddun.org

- OWASP Threat Model Library. https://owasp.org/www-project-threat-model-library

- CycloneDX Threat Modeling BOM (tm-bom): machine-readable, interoperable schema for threat model artifacts. https://cyclonedx.org/capabilities/tmb/

- Zhang et al., Survey and Analysis of Hallucinations in Large Language Models (PMC, 2024). Identifies vague and underspecified prompts as a primary cause of hallucination. https://pmc.ncbi.nlm.nih.gov/articles/PMC12518350/

- Béchard and Ayala, Reducing Hallucination in Structured Outputs via Retrieval-Augmented Generation (arXiv, 2024). Demonstrates that structured, well-organized context significantly reduces LLM hallucinations. https://arxiv.org/abs/2404.08189
